June 28, 2008

Previously, I introduced my theory of the Difficulty Divide. It’s a concept that I’ve used for several years when talking about why I use Linux, and why some people may give up on it. I also promised to show how I’ve modified it in recent years to reflect the current state of things. Before I do that, though, I think some clarification is in order.

I really appreciated the comments that were left on the previous article, both from the nit-pickers and hole-pokers and from the people who rebutted them on my behalf. Two-way discourse like that is the best way to refine models like this, especially when they are based primarily on intuition and in-the-field experience rather than formal study. Based on those comments, I realized that I failed to make one very important aspect of the Difficulty Divide concept clear.

The representation that I presented is meant to be a visualization rendered from the point of view of a “typical” Windows user who is on the verge of starting out with Linux. Several readers pointed out that as a user’s skill in Linux increases, or if they have no Windows-centric habits, the relative position of the curves would be different, giving Linux a larger advantage. In essence, as one’s knowledge of Linux increases (or knowledge of Windows decreases?) the whole curve for Linux slides down, making the divide smaller. This is almost certainly true. I seem to recall Novell sponsoring some usability studies that support this argument, but I am unable to find a link to the studies now. If any of you can find one, please leave it in the comments below! This is interesting in part because it implies that in most cases, the “floor” of the cost of Linux is defined almost exclusively by one’s own skills. That in turn implies that given enough skill, the hard cost of using Linux day-to-day can begin to approach zero, even as more tools are required to complete new tasks. Under proprietary systems, particularly ones with predominantly proprietary tools and recurring costs, this is unachievable.

This, then, is related to the tendency of people to make leaps up the difficulty axis, which prevents the natural “shrink” of the divide. Jumping to a task that really is more difficult than their knowledge level permits is often what gets them caught in the divide. They are used to having enough foundational knowledge to complete “Task X”, and when they cannot, they blame the software rather than their lack of foundational knowledge. This is becoming more and more common as the idea that computers should always be easy and never require any sort of effort to use takes hold. In Windows this is compensated for by years of informal learning, and exacerbated by the “Dummies” books and their imitators.

By providing explicit lists of steps for various tasks, these books allow people to complete work that should be over their heads by “removing” the need for foundational skills. Of course, this is ultimately a disservice to the users because it makes them dependent upon their lists to continue working at the level they expect to, but it is sound marketing and makes the perceived cost of the platform lower. I must concede too that it buys the users time, allowing them to become productive without the up-front investment required to gain foundational skills. However, most users never make that investment, and they spend all their time limited to the tasks laid out in their lists, constantly attempting to be productive with expectations that are beyond their actual skill. This is somewhat equivalent to how many people manage their money. Rather than building a foundation of capital, they live paycheck to paycheck and manage to get by OK for the most part. However, if something unexpected happens, they lack the means to handle it, and they will often require quite a lot of assistance to get back on their feet. I really see the “Dummies” books as the computer-skill equivalent of a payday loan. Does that mean we need “Dummies” books for Linux, or should they be avoided? I’m sorry, I’m getting off-topic…

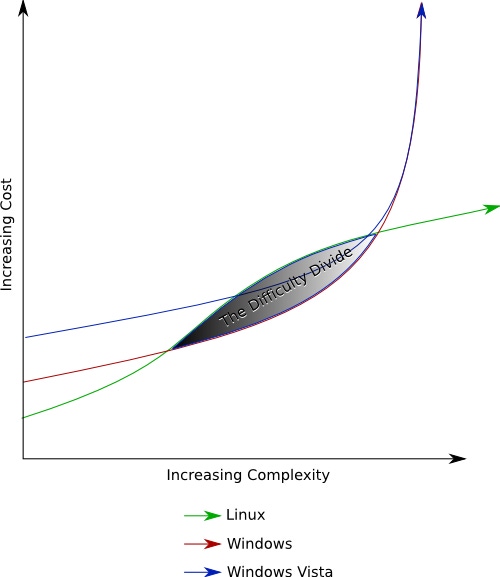

There are three major differences between this graph and the one presented previously. The initial slope for Linux is flatter, the relative position of Linux and Windows is shifted, and we have a third line that represents Windows Vista.

Thanks to all of the effort that has been put into improving the desktop Linux experience in recent years, particularly as embodied by Ubuntu, getting started in Linux is easier than ever. GUI tools are more complete, and “typical” entry-level tasks are simpler and more discoverable. Thanks also to the rise of computers that come pre-loaded with Linux, installing it, historically one of the most difficult tasks associated with using Linux, has potentially become a non-issue. This progress is represented by the “Inverse Difficulty Divide” at the far left of the graph, where the cost of Linux is lower than Windows, and by the proportionately smaller Difficulty Divide. It is to the point where many tasks are less costly to complete in Linux than in Windows. This is largely a function of the similar or better level of discoverability or “intuitiveness” of the software and the lower hard costs. One example of this is burning an ISO CD image. In Ubuntu, this requires exactly three clicks of a mouse and no software beyond what is available out of the box. With Windows, this is impossible without third-party tools. In some cases those tools come pre-installed, but that does not necessarily mean they are free of cost. Often they are demo versions or are ill-conceived custom applications. Sony is particularly guilty of creating poor-quality bundled software rather than licensing something that is well done. Even though there is at least one quality free tool available that will complete this task (InfraRecorder), one still must invest the time required to discover and locate it to be able to use it. All of that creates a cost level higher than that in Linux. And even with the tools available, I have yet to find any that match the ease of the Nautilus “Write to Disc…” context menu.

There are still some cases where “typical” tasks are less costly on Windows than on Linux, but I believe they have become the exception rather than the rule, and they generally fall in the middle ground of complexity where there is some expectation of cost, regardless of the platform being used.

Vista introduces an interesting new variable to this model. By making so many seemingly arbitrary and misguided UI changes, breaking compatibility with many tools, and delivering such poor performance on all but the most high-end hardware, Microsoft has made the perceived cost of Vista much higher than that of XP. This arguably negates whatever architectural or security improvements it may contain and also makes the harder tasks even more costly because whole new sets of support software and skills are likely to be needed. This presents a golden opportunity for Linux. For someone looking to upgrade from Windows XP, regardless of the reason, the total initial cost of moving to Linux is likely to be smaller than that of moving to Vista. This is the first time that I know of where the opportunity cost of moving to Linux is potentially lower than that of sticking with Windows. For a group of people who were unlikely to have even considered Linux previously, this opens the door to realizing the long-term savings offered by working within an open and enabling platform. Of course, there will be some people whose definition of usability includes tasks for which no quality tool exists in Linux. There will always be corner cases where one platform or another will be at a disadvantage, but for the common case of a “normal” Windows XP user, there is a strong argument for making the switch.

After the clarifications and modifications above, does the Difficulty Divide still hold up? Is it more or less valid? What further tweaks does it need? I enjoyed watching the discussion unfold last time, and I made a conscious decision to stay out of it so as not to show my cards, but now I plan to be fully involved!
