Cloud Computing News & Updates

Welcome to our Cloud News & Updates page, designed for channel partners, advisors, and tech vendors. Stay informed on the latest trends, services, and innovations in cloud technology. Explore breaking news, cloud market analysis, and expert insights to empower your business and better serve your clients in the dynamic cloud computing landscape.

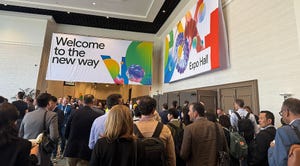

AWS Partner Summit London 2024 Takeaways

AWS Partner Summit: 5 Biggest Takeaways

It was generative AI all the way at AWS Partner Summit London – but what were the biggest talking points?